Kafka is horizontally scalable, fault-tolerant, and very fast, and it runs in production at thousands of companies. Additionally, Kafka connects to external systems (for data import/export) via Kafka Connect and provides Kafka Streams, a Java stream processing library.

Optimizing Apache Kafka Performance

Apache Kafka is a Big Data platform for distributed applications running on large hardware clusters, so the risk of downtime and interrupted data flow is high. Kafka users with complex IT environments must have a clear end-to-end monitoring and performance view of their key business performance metrics. They need to align delivery expectations with the required levels of service availability and performance for both external and internal customers (SLA/OLA).
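One metric that often feeds an end-to-end Kafka monitoring view is consumer lag: how far a consumer group's committed offset trails the end of each partition. The following is a minimal sketch in plain Python, assuming the offsets have already been collected by some monitoring tool. The function names, the sample offsets and the threshold are hypothetical and are not part of Kafka or of any product mentioned here.

```python
from typing import Dict, List


def partition_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> Dict[int, int]:
    """Return lag per partition: log end offset minus committed offset.

    Assumption: a partition with no committed offset is treated as fully
    unread (committed offset 0). This is a simplification that ignores
    the log start offset.
    """
    return {
        partition: max(end - committed.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def lagging_partitions(lag: Dict[int, int], max_lag: int) -> List[int]:
    """Return the partitions whose lag exceeds the allowed threshold."""
    return sorted(p for p, n in lag.items() if n > max_lag)


# Hypothetical sample values for a three-partition topic.
end_offsets = {0: 1500, 1: 980, 2: 2000}
committed = {0: 1495, 1: 980, 2: 1200}

lag = partition_lag(end_offsets, committed)
print(lag)                           # {0: 5, 1: 0, 2: 800}
print(lagging_partitions(lag, 100))  # [2]
```

A threshold such as `max_lag` would normally be derived from the SLA/OLA in question, for example from how many unprocessed messages correspond to an unacceptable delay.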

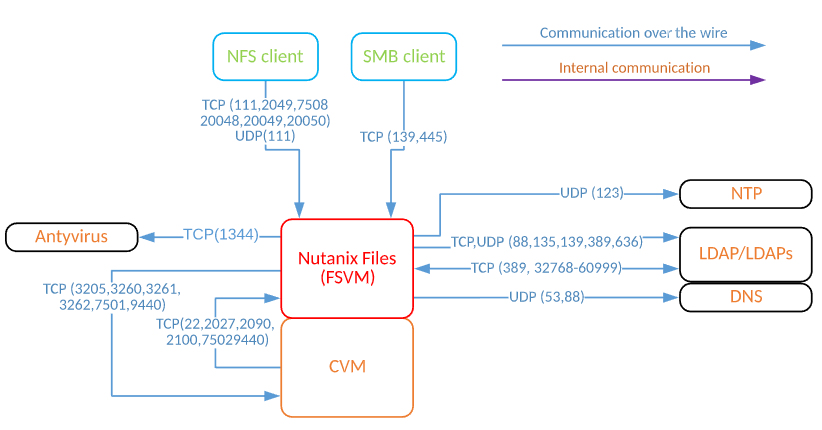

#Running Kafka on Nutanix software#

Overview

Apache Kafka is an open-source stream processing platform developed by the Apache Software Foundation and written in Scala and Java. Kafka is used for building real-time data pipelines and streaming apps.

Last month in Las Vegas, Marty Pejko met with SAP experts and community leaders to discuss how Big Data (HANA, Hadoop) customers can capture critical performance and operational analytics to help maximize the return on their investments. Marty also met our long-time partners Dell-EMC (Vblock, VxRail, Vision), Cisco (FlexPod), Nutanix and Pure Storage (FlashStack).

While converged and hyperconverged architectures speed up deployments, by themselves they lack comprehensive performance and operational analytics at the Application and OS layers, where 90% of failures occur. This is where Centerity comes in. Centerity can capture all performance and operational metrics from the Application, OS and Infrastructure layers, correlating these metrics across all domains to provide smart impact, alerting and root cause analysis. Furthermore, if multiple systems or architectures are involved, Centerity’s visualization tools can simplify the operation of these complex environments in immediately intuitive ways.

Marty Pejko, COO, Centerity, with Lawrence Wilcox, Director of Strategic Alliance, Nutanix

Apache Kafka is a popular platform among big-box retailers, which need big-data platforms to support their operations. In retail chain stores it’s common to have RFID sensors on the shelves that send data to the warehouse and place an order for missing stock. If the Kafka stream stops working and fails to deliver the message that the retailer is out of stock, and the failure is not identified in time, there is a risk of losing money. In that case, the empty shelf would not be refilled and the retailer would lose sales.
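The retail scenario described in this section, where a stalled stream goes unnoticed, can be illustrated with a simple staleness check. This is a minimal sketch in plain Python, assuming that something upstream records the time of the last message seen on each topic. The topic names, timestamps and silence threshold are hypothetical, and this is not how any particular monitoring product implements it.

```python
from typing import Dict, List


def stalled_topics(last_seen: Dict[str, float], now: float, max_silence_s: float) -> List[str]:
    """Return topics that have been silent for longer than max_silence_s seconds.

    last_seen maps a topic name to the timestamp (in seconds) of the most
    recent message observed on that topic.
    """
    return sorted(
        topic for topic, ts in last_seen.items()
        if now - ts > max_silence_s
    )


# Hypothetical timestamps, in seconds, for two illustrative topics.
last_seen = {
    "shelf-stock-events": 1000.0,  # last message 300 s ago
    "warehouse-orders": 1290.0,    # last message 10 s ago
}

print(stalled_topics(last_seen, now=1300.0, max_silence_s=120.0))
# ['shelf-stock-events']
```

A quiet topic is not necessarily a broken one, since shelves may simply not have changed. One common way to tell the two apart is to have producers send a periodic heartbeat message, so that prolonged silence reliably indicates a fault.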